Ivan Hurzeler

Visual Subject Matter Expert | AI Video Evaluation & Ground Truth Curation

I provide the human 'Ground Truth' necessary to bridge the gap between generative video and real-world physics.

With over a decade of experience as an Emmy-nominated Art Director and Documentary Filmmaker, I have captured some of the most complex environments on earth—from the high-velocity dynamics of the Indy 500 to deep-sea submersible deployments with NOAA and Woods Hole Oceanographic Institution. I complement my technical career with directing work selected for competition at the Sundance Film Festival.

My methodology leverages this field experience to audit AI models for the subtle 'fail states' that automated systems miss. By evaluating datasets through the lens of optics, fluid dynamics, and anatomical consistency, I help engineering teams move past the 'uncanny valley' toward mathematically accurate and cinematically coherent video generation.

Project 1:

Famous Person Talent Agency. Role: Director, Short film, Official Selection Sundance Film Festival

Test case 1: Spatial Continuity and Reverse-Shot Logic

Establishing

Reverse

Validation of Environmental Anchor Points:

In this sequence, I compare the spatial relationship between the foreground subject and 'off-screen' practical light sources. I make sure that shadows and reflective surfaces in the Reverse shot match the space established in the Establishing shot. Matching coverage is a primary benchmark for Multi-Shot Coherence in any video.

Test case 2: Complex Contact Physics & Anatomical Occlusion (Fight Sequence)

Fight Sequence

In a full-contact environment, I evaluate Multi-Body Contact Physics. This shot is a test of Anatomical Integrity, specifically at the points where human limbs intersect with each other and with rigid environmental objects (the desk). I look for 'pixel-melding' and 'hallucinated appendages'. I confirm that the model maintains distinct object boundaries and consistent interaction during rapid, unpredictable physical movements.

Speeding Car/Urban Kinematics

I look for appropriate motion blur and consistent perspective in high-velocity sequences. A critical failure point in generative video is 'ghosting' or 'smearing', where the subject’s geometry mixes with background textures. I can identify motion blur that is consistent with the camera’s shutter angle and flag the temporal artifacts that fall outside those technical boundaries, which often occur in high-speed scenes when shutter angle and speed of motion are represented inconsistently. In this case, the shot was made with a full-frame DSLR and a 180-degree shutter angle, which produces consistently recognizable motion blur.
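The link between shutter angle and expected blur can be sketched numerically. The example below is a minimal illustration, not a description of any production tool: the function names, the 24 fps frame rate, the on-screen speed, and the tolerance are all assumptions chosen for the example. Only the 180-degree shutter angle comes from the shot described above.

```python
def exposure_time_s(shutter_angle_deg: float, fps: float) -> float:
    """Exposure time per frame, from the standard shutter-angle relation:
    exposure = (angle / 360) / frame rate."""
    if not 0 < shutter_angle_deg <= 360:
        raise ValueError("shutter angle must be in (0, 360] degrees")
    if fps <= 0:
        raise ValueError("fps must be positive")
    return (shutter_angle_deg / 360.0) / fps


def expected_blur_px(speed_px_per_s: float, shutter_angle_deg: float, fps: float) -> float:
    """Approximate blur streak length for a subject moving at a constant
    on-screen speed while the shutter is open."""
    return speed_px_per_s * exposure_time_s(shutter_angle_deg, fps)


def blur_is_plausible(measured_px: float, expected_px: float, rel_tol: float = 0.25) -> bool:
    """True if a measured streak is within a relative tolerance of the expected one.
    The 25% default is an arbitrary illustrative threshold."""
    return abs(measured_px - expected_px) <= rel_tol * expected_px


if __name__ == "__main__":
    # Hypothetical values: 24 fps and a subject crossing the frame at 1920 px/s.
    expected = expected_blur_px(speed_px_per_s=1920, shutter_angle_deg=180, fps=24)
    print(f"expected blur: {expected:.1f} px")
    print("12 px streak plausible?", blur_is_plausible(12, expected))
```

Under these assumed numbers, a 180-degree shutter at 24 fps exposes each frame for 1/48 s, so a generated frame showing a much shorter or much longer streak for the same apparent speed would be worth flagging for review.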

Project 1 video/Ground Truth link: “Famous Person Talent Agency”

Project 2:

Indy Car Documentary. Role: Director, DP

Test case 1: High-Velocity Temporal Stability (Speeding Car)

Spinning tires/High Velocity Stress Test

I look for breaks in physics between wheels, rims, and the ground. In high-velocity tracking shots like this, I assess whether the model maintains the geometric volume of the wheels during high-RPM rotation. I can catch 'rolling shutter' hallucinations and confirm that motion blur vectors align with the vehicle’s ground speed and camera tracking velocity. This evaluation is different for every camera; this example was shot with a 2/3-inch broadcast camera that produces very little blur.

Test case 2: Volumetric Physics & Particle Persistence

Crash and spinout/High Velocity Stress Test

I can confirm the physical characteristics of a crash and the persistence of volumetric smoke. In high-energy impact sequences, I monitor the interaction between solid bodies (the wall/chassis) and gaseous particulates (tire smoke). I identify 'temporal flickering' in the smoke density and make sure the debris maintains a consistent trajectory from the point of impact, flagging physics-defying particle behavior.
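One way a flicker check of this kind could be automated as a first pass is sketched below. It is an assumption-laden illustration rather than an established metric: it presumes a per-frame smoke density value already exists (for example, mean brightness inside a smoke mask), and the function name and the 20% threshold are hypothetical.

```python
from typing import List, Sequence


def flicker_frames(density: Sequence[float], max_rel_change: float = 0.2) -> List[int]:
    """Return indices of frames whose smoke density jumps by more than
    max_rel_change relative to the previous frame.

    Real smoke thins out gradually, so large frame-to-frame jumps are
    candidates for human review, not automatic failures.
    """
    flagged = []
    for i in range(1, len(density)):
        prev, cur = density[i - 1], density[i]
        baseline = max(abs(prev), 1e-9)  # guard against division by zero
        if abs(cur - prev) / baseline > max_rel_change:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical per-frame densities: frame 3 drops out, frame 4 snaps back.
    series = [0.50, 0.52, 0.55, 0.20, 0.56, 0.58]
    print(flicker_frames(series))
```

A heuristic like this only narrows down where to look. Deciding whether a flagged jump is a real artifact or a legitimate event, such as the car passing in front of the smoke, still requires a reviewer who understands the shot.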

Test case 3: High-Density Occlusion & Equipment Logic

Pit Crew At Work

I evaluate complex mechanical interactions. This test case focuses on the intersection of human limbs with high-detail equipment (fuel hoses, helmet visors, aerodynamic wings). I check for 'pixel-bleeding' at the contact points, ensuring the model maintains distinct object boundaries. I also verify Functional Logic, checking that mechanical tools are gripped and used according to real-world physics rather than morphing into the subject's anatomy. Understanding intentionality is key to evaluating the performance of the model. In this case, the scene was shot with a Canon C300 with an APS-C-sized sensor, which produces a subtle rolling shutter and a classic 180-degree shutter motion blur. Understanding this camera’s behavior allows me to properly evaluate object boundaries.

Project 2 video/Ground Truth link: “Indy 500 Documentary” Commissioned by Verizon

Project 3:

Feature Documentary “Acid Horizon”. Official Selection Santa Barbara International Film Festival, Distributed by Gravitas Ventures. Role: Director, Producer, Editor, DP

Test case 1: Surface Tension And Mass Displacement

Recovering a submersible

I can correctly judge solid-body and fluid interactions. In this sequence, I note the displacement of the water's surface as the vessel transitions between sea and air. I look at the 'white water' churn and spray vectors to ensure they exhibit realistic mass and gravity response. By understanding all the factors in the scene (the submersible, the ship, the sea, the air, and the camera), I can identify the conditions that produce the strange 'weightless' or 'sliding' artifacts common in generative AI fluid simulations.

Test case 2: Rigid Body Contact and Particulate Plumes

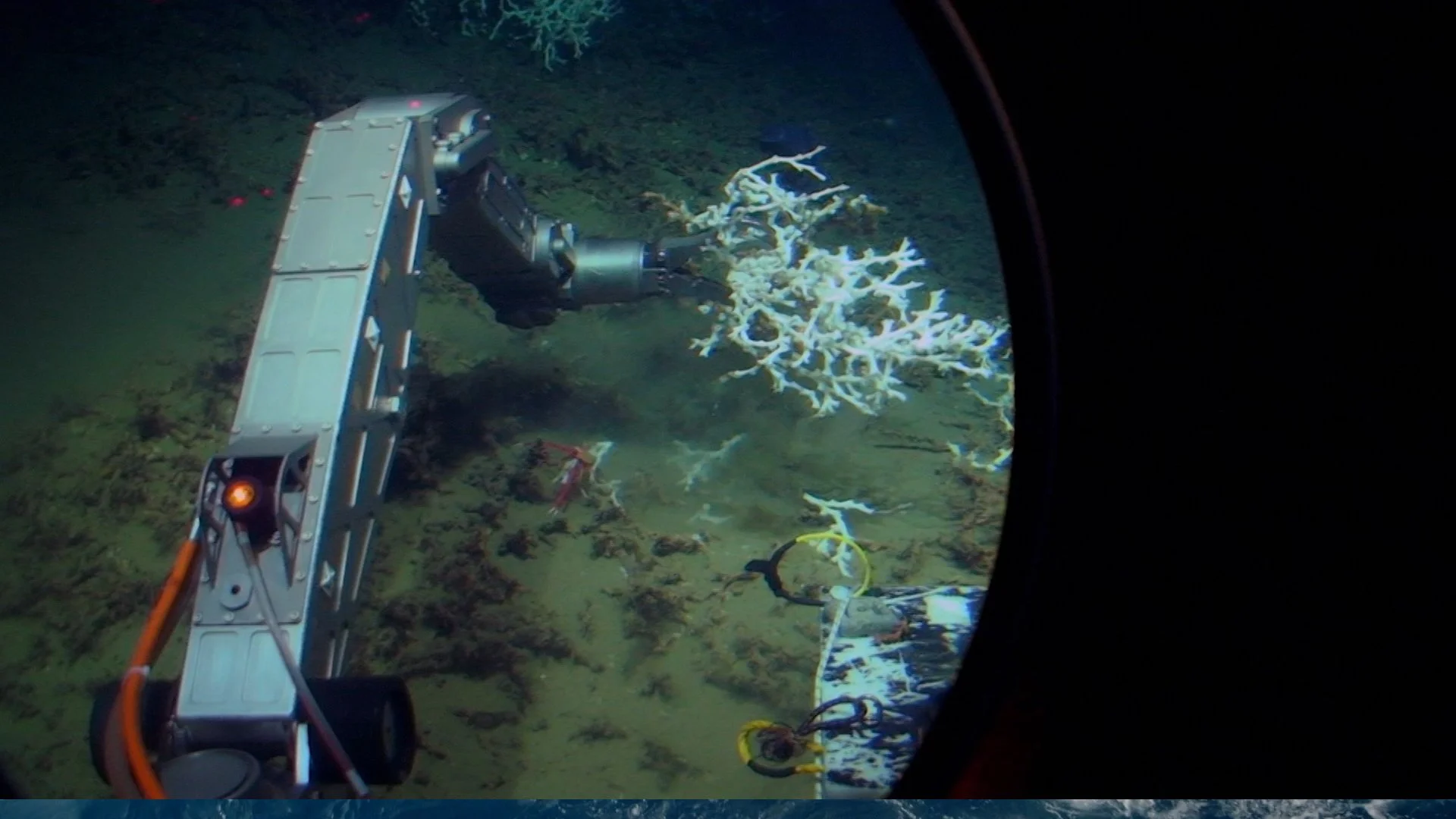

Manipulator Arm Taking Coral Sample

I evaluate contact physics and secondary fluid effects. In this sequence, I examine the intersection between a high-torque, submersible-mounted manipulator and the seabed. I make sure the resulting sediment plume reacts to water resistance correctly. I look for 'weightless' artifacts where the sand might move too fast (as if it were in air); instead, I ensure the plume exhibits the heavy, slow-moving behavior of a high-density liquid environment. This provides a ground-truth benchmark for how objects and particles should interact on the ocean floor. This scene has the added complexity of a handheld POV shot from inside a submersible. Understanding that context is crucial to judging the fidelity of an image like this.

Test case 3: Macro Detail and Particle Consistency

Close up of Lophelia pertusa in situ

I evaluate High-Frequency Detail and Particle Stability in macro environments. In this sequence, I audit the 'marine snow'—the suspended sediment in the water—to ensure each particle maintains a consistent path without flickering or disappearing. I also monitor the fine textures of the coral to prevent 'pixel-bleeding,' ensuring the model maintains sharp object boundaries even during subtle camera drifts. This serves as a ground-truth benchmark for how generative models should handle complex organic textures in a liquid medium.
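The slow-moving behavior expected of fine suspended sediment in the underwater test cases above can be given a rough physical scale with Stokes' law for a small sphere settling in a still fluid. This is a simplified sketch under stated assumptions: the grain size and the fluid and particle properties are round textbook-style values chosen for illustration, not measurements from the footage. Stokes' law also holds only for small particles at low Reynolds number, so it ignores the turbulence a manipulator arm stirs up.

```python
def stokes_settling_velocity(
    diameter_m: float,
    particle_density: float,
    fluid_density: float,
    fluid_viscosity: float,
    g: float = 9.81,
) -> float:
    """Terminal settling velocity (m/s) of a small sphere, from Stokes' law:
    v = (rho_p - rho_f) * g * d^2 / (18 * mu)."""
    return (particle_density - fluid_density) * g * diameter_m ** 2 / (18.0 * fluid_viscosity)


if __name__ == "__main__":
    d = 50e-6            # assumed grain diameter: 50 micrometres (fine silt/sand)
    rho_grain = 2650.0   # assumed quartz-like grain density, kg/m^3

    # Approximate fluid properties (illustrative round values).
    v_water = stokes_settling_velocity(d, rho_grain, fluid_density=1000.0, fluid_viscosity=1.0e-3)
    v_air = stokes_settling_velocity(d, rho_grain, fluid_density=1.2, fluid_viscosity=1.8e-5)

    print(f"settling in water: {v_water * 1000:.2f} mm/s")
    print(f"settling in air:   {v_air * 1000:.2f} mm/s")
    print(f"air/water ratio:   {v_air / v_water:.0f}x")
```

With these assumed values the same grain settles on the order of millimetres per second in water and tens of centimetres per second in air. That gap is why sediment that falls or disperses at air-like speeds reads immediately as a 'weightless' artifact in an underwater shot.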

To calibrate AI models effectively, an auditor must understand not just the pixel, but the physics of the capture.

Technical Stack & Field Experience:

Precision Imaging: RED Digital Cinema, Arri Alexa, and high-speed motion systems used in professional racing and narrative features.

Extreme Environment Optics: Specialized sub-aquatic housings and optics for deep-sea research (Alvin submersible and E/V Nautilus). Experienced free diver.

Technical QC & Post-Production: DaVinci Resolve (Color Science & Optical Flow), Unreal Engine (Virtual Production), and CVAT (Annotation).

Core SME Domains: Temporal Consistency, Multi-Phase Fluid Dynamics, Water Resistance Logic, and High-Friction Occlusion.

Project 3 video/Ground Truth link: Feature Documentary “Acid Horizon”
